DB FPX 8620 Assessment 3


DB FPX 8620 Assessment 3 Business Project Idea: Developing a Business Study

Student name

Capella University

DB-FPX8620 High Performance Leadership

Professor Name

Submission Date

Introduction

The adoption of artificial intelligence (AI) in financial services continues to grow rapidly, with solutions being developed for risk assessment, fraud detection, customer relationship analysis, and staff optimization. Overall AI investment in the financial services industry (banking and insurance) is projected to grow by an estimated 97 billion dollars by 2027, and AI-enabled automation is already improving operational efficiency, with institutions reporting an average cost reduction of 45 percent across automated processes (Vuković et al., 2025).

The growing dependence on AI-based processes raises the demand for effective executive leadership that can address the regulatory requirements, ethical issues, and organizational problems associated with algorithmic decision systems (Cajueiro & Celestino, 2025). Recent studies focus on leadership frameworks that combine governance structures with innovation-driven approaches to manage AI responsibly and support competitive growth. The proposed project therefore seeks to identify leadership practices that enable successful human-AI cooperation during digital transformation. A strong AI leadership structure is key to sustainable financial progress and strategic resilience.

Problem of Practice

| Section | Problem of Practice |
|---|---|
| Overall Issue | The overall issue is the growing sophistication of AI-based financial services, which requires leadership frameworks that can govern complex digital technology without compromising strategic performance. The use of AI in risk assessment, fraud prevention, and credit scoring continues to grow across financial institutions; nevertheless, leadership capacity lags behind the rapid development of AI systems. Scholarly studies indicate that a lack of executive accountability has contributed to increased vulnerability to algorithmic bias, cybersecurity risks, and weak regulatory compliance (Cremer et al., 2022; Konopik et al., 2021). Consequently, organizational performance is put at risk when AI integration lacks strategic direction grounded in effective leadership practices. |
| Particular Issue | The particular issue is the lack of a clear leadership approach that facilitates successful human-AI partnership during the digital transformation of the financial sector. Researchers have indicated that most financial institutions depend heavily on automation without instituting governance systems that balance analytical functions with ethical safeguards and human judgment (Aslam et al., 2025; Kabir et al., 2025). Leadership teams frequently adopt AI tools to improve operational efficiency, but the organizational structure tends to stay the same, leaving employees unable to use AI insights responsibly. The absence of structured leadership models intensifies concerns about AI credibility, accountability to stakeholders, and decision transparency. |
| Problem Manifesting | The problem manifests as inconsistent application of AI, uneven workforce preparedness, and declining trust in AI-assisted decision-making. Many financial organizations report employee resistance, a lack of technical competency, and ambiguity about how human roles are expected to change alongside algorithmic systems (Koo et al., 2025). Operational challenges arise when frontline managers lack the competency to interpret AI outputs or to integrate AI-based insights into strategic planning. The lack of a consistent leadership system diminishes the value of AI investments and causes tension in organizations, leading to performance gaps, ethical doubts, and untapped digital opportunities. |

Gap in Practice

The gap in practice is the absence of a systematic leadership framework that could facilitate human-AI cooperation during the digital transformation of the financial sector. Although many financial institutions are expanding their use of AI in risk analytics, fraud detection, and customer modeling, senior leaders often operate without an overarching view of AI use that incorporates governance, ethics, and workforce development (Goyal et al., 2025).

Existing leadership approaches tend to focus on the benefits of automation and overlook the need for models that position AI alongside human skills (Mikalef & Gupta, 2021; Przegalinska et al., 2024). Consequently, organizational executives struggle to align AI capabilities with strategic priorities, resulting in uneven oversight and a decline in decision quality.

There is a substantial gap between the current state, marked by disjointed AI implementation, limited employee readiness, and ad hoc governance, and the desired state, in which a leadership system fosters responsible AI integration, ongoing upskilling, and transparent decision-making. Research has shown that organizations with formal human-AI collaboration structures are more adaptive, more risk-averse, and more trusting of AI-based insights (AlNuaimi et al., 2022; Koo et al., 2025). The lack of such a system constitutes the gap in practice, since financial institutions experience performance issues rooted in insufficient leadership direction, unclear role expectations, and weak support for collaborative intelligence. An effective leadership model is required to narrow the identified gap and secure the sustainable value of AI-enabled transformation.

Purpose of the Project and Project Questions

The purpose of the proposed qualitative business project is to study the leadership practices that promote effective human-AI cooperation in financial services organizations. The project will employ a case study method to explore how managers build governance frameworks, nurture ethical practices, and adopt workforce development strategies that reinforce AI-powered transformation. The growing dependence on advanced analytics, automation, and predictive modeling has created new leadership requirements that align innovation with responsibility.

Several financial institutions that use AI tools still lack evidence of a consistent leadership framework that integrates organizational culture, employee potential, and open decision-making (Shaban & Omoush, 2025). Examining leadership behavior, communication strategies, and organizational enablers will clarify how executives navigate regulatory demands, reduce algorithmic risks, and establish trust in AI-based insights. The outcomes of the study will generate knowledge to guide the development of a leadership framework that enhances sustainable competitive performance during the current digital evolution.

Project Questions

- How do financial-sector leaders design organizational practices that promote effective human–AI collaboration during digital transformation?

- What leadership strategies support responsible AI governance, workforce readiness, and ethical decision processes in financial services organizations?

Preliminary Terms and Definitions

Human–AI Collaboration

Human–AI collaboration is a structured form of cooperation in which an organization makes decisions by drawing on both artificial intelligence systems and human specialists, each contributing different strengths. AI offers computational speed, analytical depth, and pattern identification, whereas humans apply contextual understanding, moral judgment, and tactical insight (Papagiannidis et al., 2025). The concept emphasizes symbiotic interaction rather than automation-driven substitution and is a basis of responsible digital transformation leadership.

AI Governance

AI governance comprises the organizational policies, control mechanisms, and ethical standards that govern the design, implementation, and ongoing operation of artificial intelligence tools. Governance practices establish accountability for algorithmic fairness, data protection, regulatory compliance, and transparency in automated processes (Papagiannidis et al., 2025). Effective governance frameworks help leaders manage the risks related to predictive analytics and machine-learning applications in the financial services industry.

Digital Transformation Leadership

Digital transformation leadership is the combination of executive behaviors, strategic competencies, and organizational processes that enable the adoption of advanced technologies. Leaders working in AI-enabled environments need to merge innovation management, ethical responsibility, and workforce development to promote sustainable technological progress (Sacavém et al., 2025). The concept emphasizes the role of leadership in aligning organizational culture, technical capabilities, and long-term digital strategy.

Project Justification

The rapid spread of artificial intelligence applications in finance has created urgency around developing leadership paradigms that enable successful interaction between human and AI resources. Mikalef and Gupta (2021) and Przegalinska et al. (2024) argued that, in the absence of structured governance, ethical controls, and employee involvement, AI projects may create bias, efficiency losses, and a lack of stakeholder confidence.

Practitioner literature has also stressed that many executives do not understand how to incorporate AI into strategic planning, regulatory compliance, and organizational accountability (Kabir et al., 2025). These observations suggest a clear need to explore leadership strategies that can facilitate responsible and sustainable integration of AI.

Answering the proposed project questions is important because the existing literature documents disjointed approaches to AI adoption but offers little detail on the leadership practices that would balance innovation with human oversight. A case study examining governance, workforce preparedness, and ethical decision-making can help bridge this knowledge gap and offer evidence-based models that optimize the benefits of AI and address the associated risks (AlNuaimi et al., 2022; Koo et al., 2025). Knowledge of the identified strategies can help organizations pursue digital transformation initiatives more effectively.

The project is significant to organizational leaders, executives, and innovation managers in financial institutions who intend to adopt AI responsibly and strategically. By revealing evidence-based leadership practices that lead to better ethical governance, employee readiness, and trustworthy AI decision-making, the findings will help executives establish strong governance frameworks, develop workers' competencies, and enhance organizational confidence in artificial intelligence.

Indirect beneficiaries include stakeholders such as regulators, customers, and employees, because better leadership practice will increase operational transparency, strengthen corporate ethics, and improve organizational resilience. Ultimately, the project outcomes will help financial organizations gain a sustainable competitive advantage in an increasingly technology-based environment.

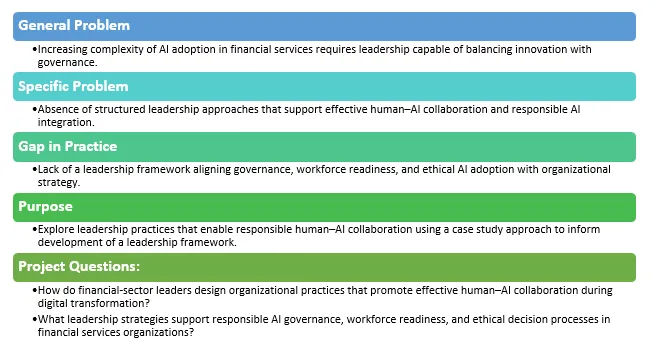

Author Note: Reflection on Alignment

The alignment of the general problem, specific problem, identified gap, purpose, and project questions demonstrates a logical focus on leadership practices that facilitate effective human-AI collaboration in financial services. The overall issue is the complexity of AI adoption and the need for strategic leadership, whereas the particular problem is the lack of structured leadership strategies to direct ethical, responsible, and efficient AI implementation. The practice gap highlights the difference between the present disjointed leadership practices and the ideal of holistic, governance-based human-AI coordination.

The purpose statement and project questions directly address the identified gap by examining the leadership strategies, governance, and workforce development initiatives needed to improve AI-enabled transformation. The alignment of all elements keeps the proposed study consistent and focused on producing actionable information for leaders who want to reconcile technological innovation with organizational responsibility, ethical standards, and sustainable competitive advantage.

Figure 1

Graphical Representation

References

AlNuaimi, B. K., Kumar, S. S., Ren, S., Budhwar, P., & Vorobyev, D. (2022). Mastering digital transformation: The nexus between leadership, agility, and digital strategy. Journal of Business Research, 145, 636–648. https://doi.org/10.1016/j.jbusres.2022.03.038

Aslam, M. M., Tufail, A., Gul, H., Irshad, M. N., & Namoun, A. (2025). Artificial intelligence for secure and sustainable industrial control systems – A survey of challenges and solutions. Artificial Intelligence Review, 58(11), 7963–7969. https://doi.org/10.1007/s10462-025-11320-9

Cajueiro, D. O., & Celestino, V. R. R. (2025). A comprehensive review of artificial intelligence regulation: Weighing ethical principles and innovation. Journal of Economy and Technology, 4, 77–91. https://doi.org/10.1016/j.ject.2025.07.001

Cremer, F., Sheehan, B., Fortmann, M., Kia, A. N., Mullins, M., Murphy, F., & Materne, S. (2022). Cyber risk and cybersecurity: A systematic review of data availability. The Geneva Papers on Risk and Insurance – Issues and Practice, 47(3), 33–43. https://doi.org/10.1057/s41288-022-00266-6

Goyal, K., Garg, M., & Malik, S. (2025). Adoption of artificial intelligence-based credit risk assessment and fraud detection in the banking services: A hybrid approach (SEM-ANN). Future Business Journal, 11(1), e44. https://doi.org/10.1186/s43093-025-00464-3

Konopik, J., Jahn, C., Schuster, T., Hoßbach, N., & Pflaum, A. (2021). Mastering the digital transformation through organizational capabilities: A conceptual framework. Digital Business, 2(2), 100019. https://doi.org/10.1016/j.digbus.2021.100019

Koo, I., Zaman, U., Ha, H., & Nawaz, S. (2025). Assessing the interplay of trust dynamics, personalization, ethical AI practices, and tourist behavior in the adoption of AI-driven smart tourism technologies. Journal of Open Innovation: Technology, Market, and Complexity, 11(1), e100455. https://doi.org/10.1016/j.joitmc.2024.100455

Mikalef, P., & Gupta, M. (2021). Artificial intelligence capability: Conceptualization, measurement calibration, and empirical study on its impact on organizational creativity and firm performance. Information & Management, 58(3), e103434. https://doi.org/10.1016/j.im.2021.103434

Papagiannidis, E., Mikalef, P., & Conboy, K. (2025). Responsible artificial intelligence governance: A review and research framework. The Journal of Strategic Information Systems, 34(2), e101885. https://doi.org/10.1016/j.jsis.2024.101885

Przegalinska, A., Triantoro, T., Kovbasiuk, A., Ciechanowski, L., Freeman, R. B., & Sowa, K. (2024). Collaborative AI in the workplace: Enhancing organizational performance through resource-based and task-technology fit perspectives. International Journal of Information Management, 81(1), e102853. https://doi.org/10.1016/j.ijinfomgt.2024.102853

Sacavém, A., Moreira, A., Rocha, H., & Oliveira, M. (2025). Leading in the digital age: The role of leadership in organizational digital transformation. Administrative Sciences, 15(2), 43–50. https://doi.org/10.3390/admsci15020043

Shaban, O. S., & Omoush, A. (2025). AI-driven financial transparency and corporate governance: Enhancing accounting practices with evidence from Jordan. Sustainability, 17(9), e3818. https://doi.org/10.3390/su17093818

Vuković, D. B., Dekpo-Adza, S., & Matović, S. (2025). AI integration in financial services: A systematic review of trends and regulatory challenges. Humanities and Social Sciences Communications, 12(1), e562. https://doi.org/10.1057/s41599-025-04850-8
